Situational Intelligence for the Moon

Artificial intelligence is helping us understand, and assist in, humanity’s return to the Moon. As we enter this new era of sustained lunar activity, intelligent systems that can interpret, predict, and coordinate across diverse data sources in the lunar environment will be a keystone capability.

For more than a decade, the Frontier Development Lab (FDL) has operated at the intersection of AI, space science, and planetary intelligence. In collaboration with the Luxembourg Space Agency (LSA), our applied research has delivered a series of firsts, from the first-ever view into the lunar Permanently Shadowed Regions (PSRs) to lunar localisation without GPS, thermal anomaly maps, and many others. Each milestone has advanced the broader goal of building resilient reasoning systems capable of operating where latency, limited bandwidth, and uncertainty are inherent.

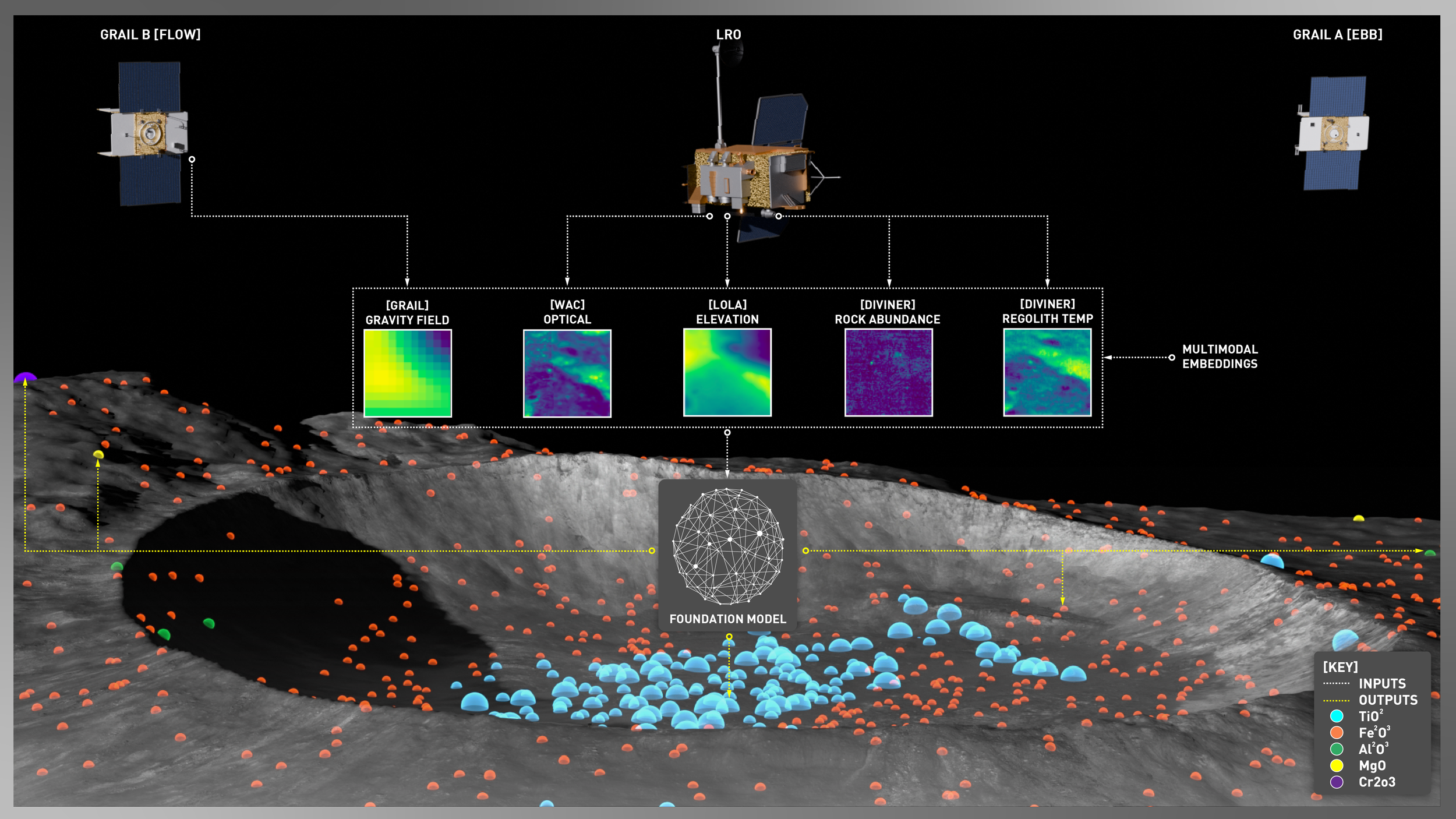

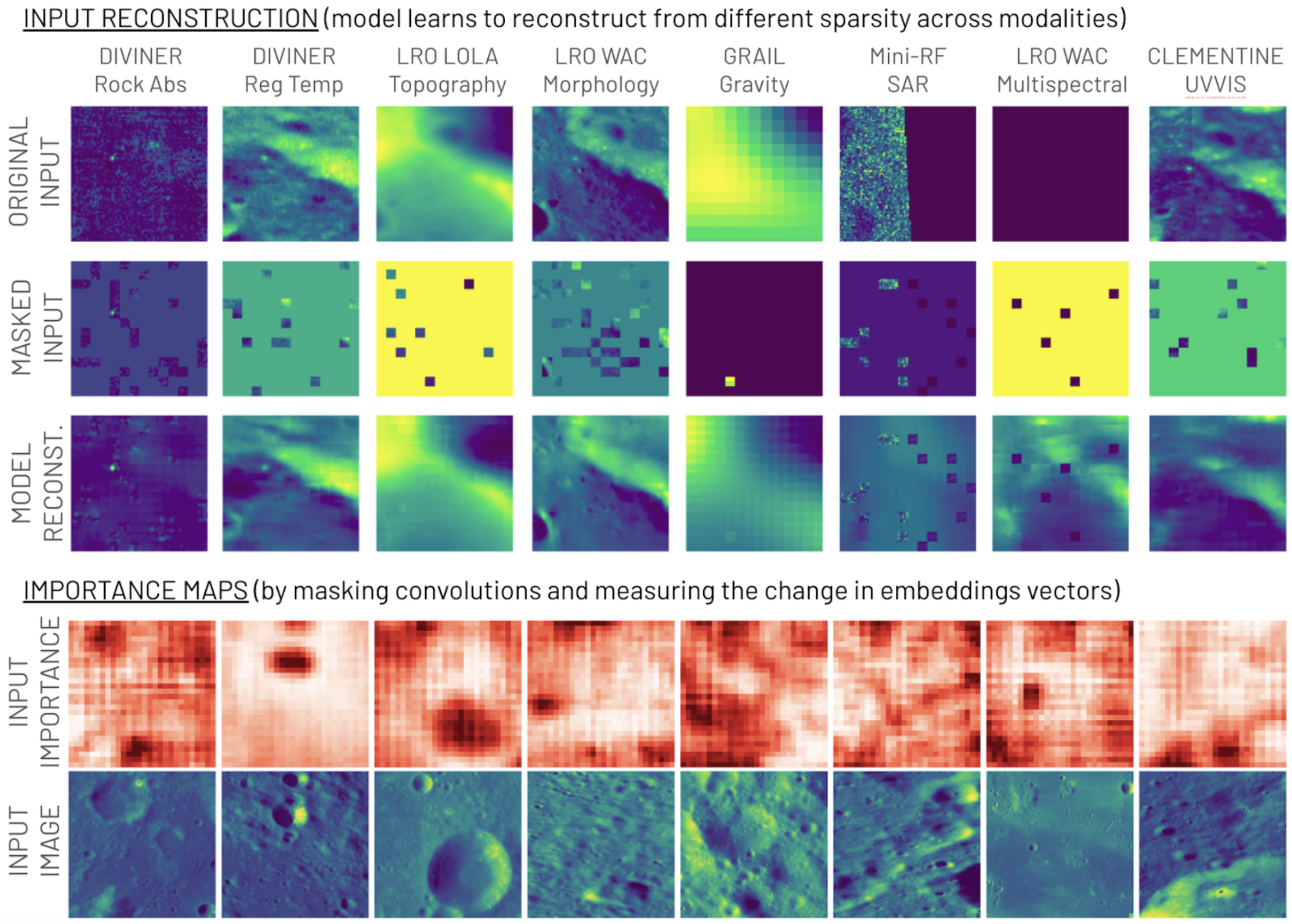

In 2025, we are extending this lineage with the development of Lunar-FM, the world’s first Lunar Foundation Model, designed to unify and interpret the Moon’s heterogeneous data with an initial focus on lunar resources.

Lunar-FM integrates inputs from multiple spacecraft into a single multimodal architecture. This fusion allows the model to build a unified representation of the lunar environment that, for the first time, can be queried in natural language.

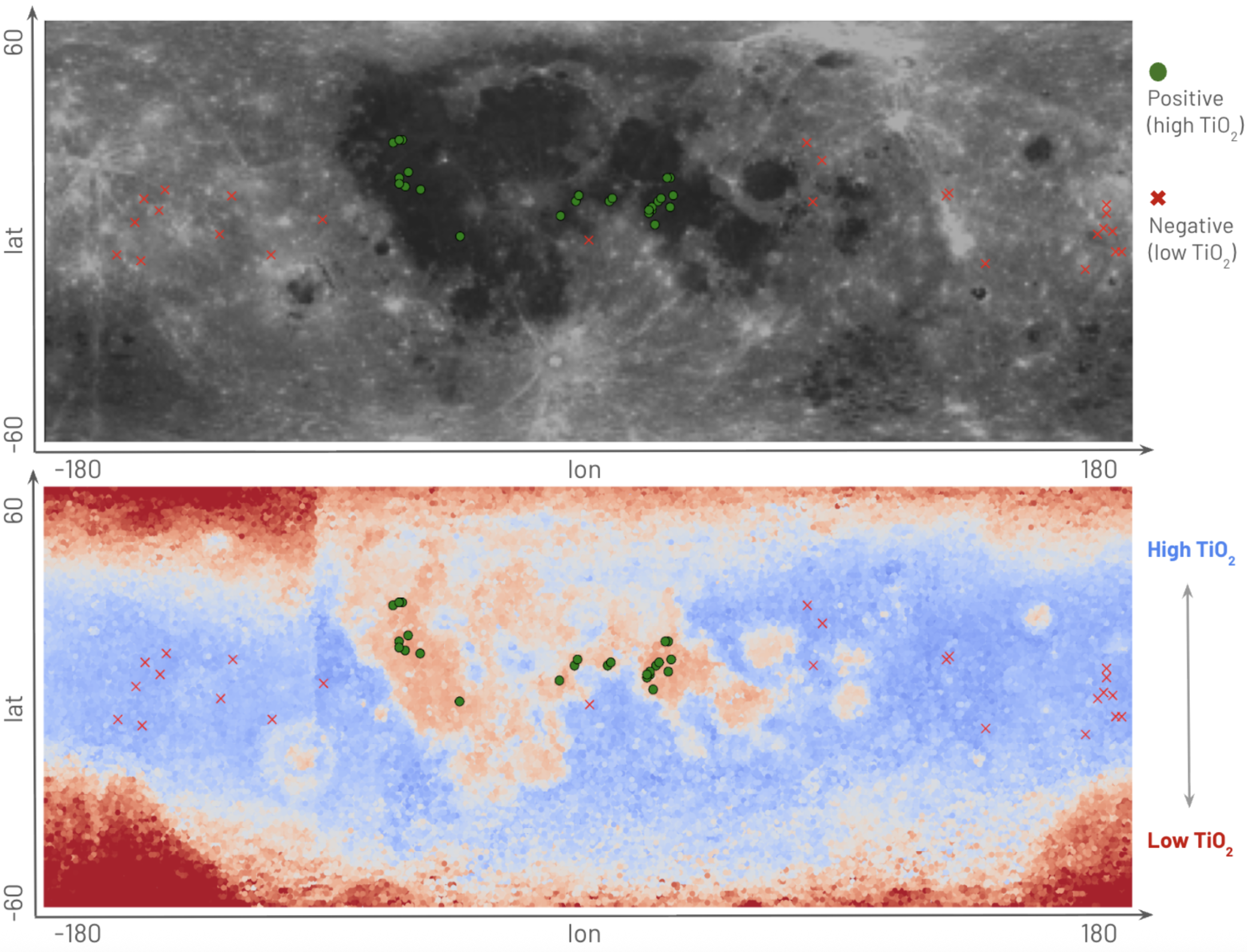

As on Earth, the most transformative aspect of the emergence of foundation models is multimodal reasoning. By adapting transformer-based architectures originally developed for natural language understanding, we are enabling models that can converse with complex data, effectively turning lunar datasets into queryable knowledge systems. A scientist or engineer could ask, “Which candidate sites show the most stable illumination for power generation?” and receive an interpretable, physics-informed response supported by visualisation and uncertainty estimates. This first model is designed for resource-related queries. Future versions of Lunar-FM will unlock more granular capabilities, supporting surface operations and the science goals of NASA’s Artemis program.

Together, hybrid observation, onboard learning, and multimodal reasoning in natural language define a new discipline: Lunar Systems Intelligence, an architecture for continuous, adaptive understanding of the Moon as a complex, evolving system.

The implications for stakeholders such as the Luxembourg Space Agency and the European Space Resources Innovation Centre (ESRIC) are significant. A model like Lunar-FM can directly support lunar resource characterisation, In-Situ Resource Utilisation (ISRU) feasibility assessment, and infrastructure planning, from water-ice mining to power distribution, by providing probabilistic, explainable insights at the speed of mission operations.

Lunar-FM represents the beginnings of a Lunar Operating System for exploration and utilisation: an AI substrate capable of linking simulation, sensing, and decision-making in a single adaptive loop. In doing so, it lays the groundwork for the next phase of humanity’s multi-planetary future, in which intelligent systems don’t just observe the Moon but help us stay there for good.